So after reading the Bananas Diet post we are now familiar with the concept of causality bias. Another example of causality bias, which I first came across a few years ago, is the one about black car crashes.

Articles like this one show that anyone with some data can conduct a study, but the insights derived from it often confuse correlation with causality.

This is a clear example. Data does not lie: the most common car color involved in car accidents is black. So is the color causing the accident? Is eating ice cream causing sunburns?

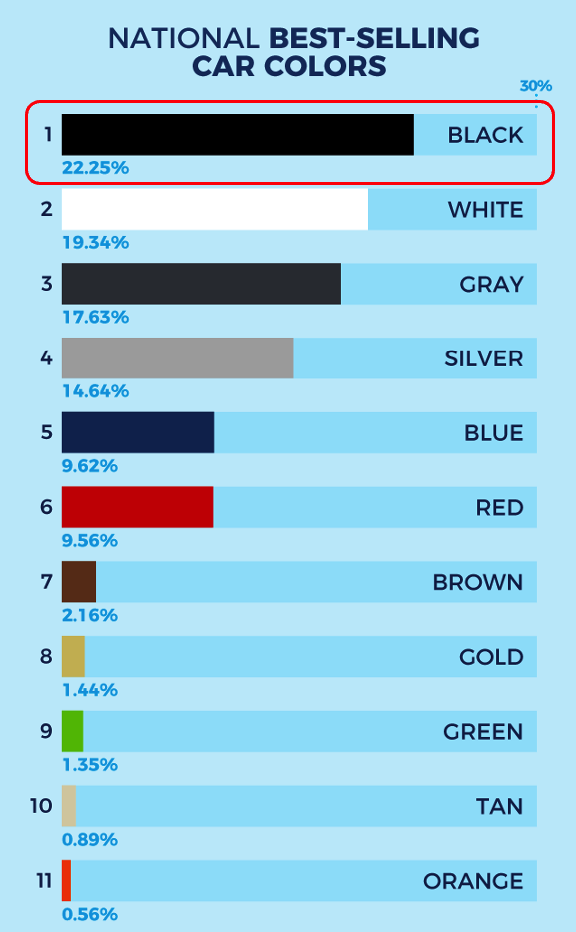

The idea that a car's color could affect other drivers' ability to see the vehicle is somewhat fascinating, but since we all use headlights at night, I don't think that's the reason black is the riskiest color. So what could be the cause? Let's first ask ourselves: what's the most popular car color? Maybe its popularity could be one factor…

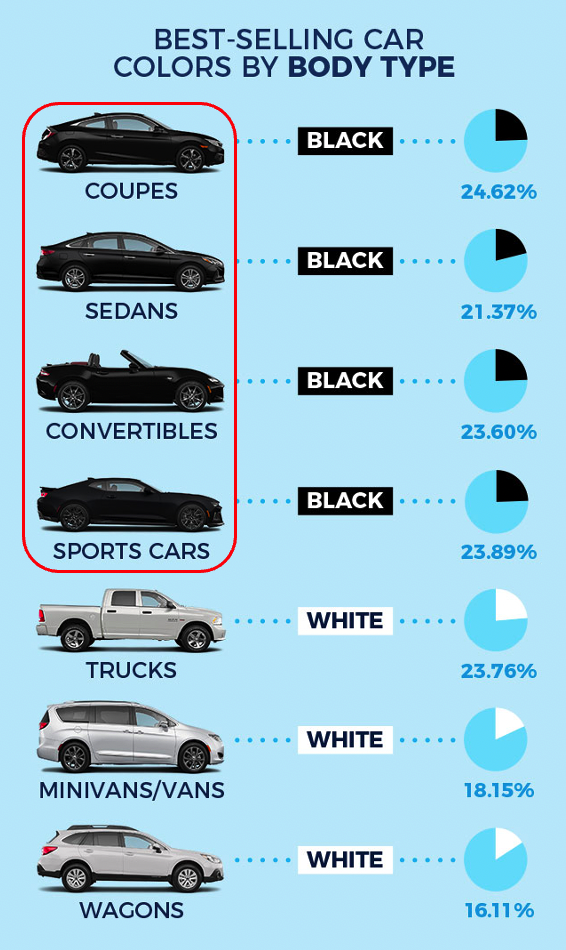

So it's somewhat obvious: the more black cars are on the road, the more likely it is that some of them will be involved in crashes. But the article does not talk only about the absolute number of black cars involved in crashes; it says that the percentage of crashes is higher for black cars. So explaining the higher risk with the higher number of black vehicles alone is not enough. Let's dig a little deeper then. What about the car models? For example: are black cars more often sporty cars or family cars?
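To make the difference between "more black cars crash" and "black cars crash more than their popularity explains" concrete, here is a minimal sketch. All the numbers are made up for illustration (they are not taken from the article or the charts), and `relative_crash_rate` is just a name I chose: it divides a color's share of crashes by its share of cars on the road.

```python
# Hypothetical shares, invented for illustration only (not real data).
fleet_share = {"black": 0.22, "white": 0.20, "silver": 0.18, "other": 0.40}
crash_share = {"black": 0.28, "white": 0.17, "silver": 0.15, "other": 0.40}


def relative_crash_rate(color):
    """Share of crashes divided by share of cars on the road.

    1.0 means the color crashes exactly as often as its popularity predicts;
    above 1.0 means popularity alone does not explain the crashes.
    """
    return crash_share[color] / fleet_share[color]


for color in fleet_share:
    print(f"{color:>6}: {relative_crash_rate(color):.2f}")
```

With these invented numbers black ends up above 1.0, which is the situation the article describes: popularity explains part of the picture, but not all of it, so we have to keep looking.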

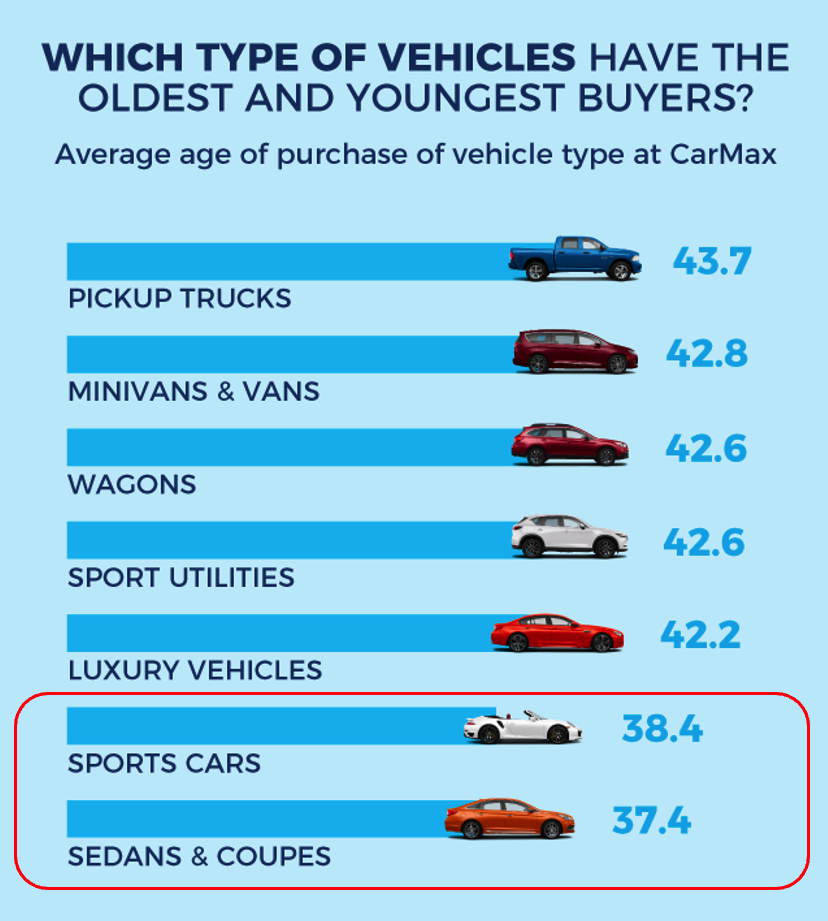

As we can see, we now have some more data to analyze: among all colors, a black car is more likely to be a fast one. And I think we can all agree that higher speed is usually related to a higher risk of accidents. But we should not stop here. How about the drivers? Who drives black cars? How experienced are those drivers?

If we look at the average driver's age, sports cars, sedans and coupes (which are more likely to be black) are also driven by the youngest age group (38 is the average, lowered a lot by the newbies, who have much less driving experience).

So in my opinion the reason black cars are riskier is a combination of factors: faster cars and younger drivers. A perfect storm. After all, which is more likely: that the cause of the crashes is the color itself, which for some reason affects other drivers' perception of the black vehicle, or that it is the type of car, which is more powerful, together with the less experienced driver?
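One way to check an explanation like this is to compare colors within the same type of car instead of across the whole fleet. The sketch below uses counts I invented on purpose so that color has no effect at all inside each car type; the names `overall_rate` and `rate_by_type` are mine, not from any study.

```python
# Hypothetical counts, invented for illustration: (car type, color) -> (cars, crashes).
# Within each car type the crash rate is the same for both colors.
data = {
    ("sporty", "black"): (600, 60),   # 10% crash rate
    ("sporty", "other"): (200, 20),   # 10% crash rate
    ("family", "black"): (400, 8),    # 2% crash rate
    ("family", "other"): (800, 16),   # 2% crash rate
}


def overall_rate(color):
    """Crash rate for a color, ignoring the type of car."""
    cars = sum(n for (_, c), (n, _) in data.items() if c == color)
    crashes = sum(k for (_, c), (_, k) in data.items() if c == color)
    return crashes / cars


def rate_by_type(car_type, color):
    """Crash rate for a color within a single car type."""
    cars, crashes = data[(car_type, color)]
    return crashes / cars


print("overall:", overall_rate("black"), overall_rate("other"))
for car_type in ("sporty", "family"):
    print(car_type, rate_by_type(car_type, "black"), rate_by_type(car_type, "other"))
```

In this made-up fleet black cars look almost twice as risky overall, yet inside each car type black and non-black crash at the same rate. The whole gap comes from black cars being more often sporty, which is exactly the kind of hidden factor described above.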

By the way, there is a well-known principle for judging hypotheses: Occam's razor, also spelled Ockham's razor or Ocham's razor (and also known as the principle of parsimony or the law of parsimony). But this topic needs to be treated in a dedicated post.

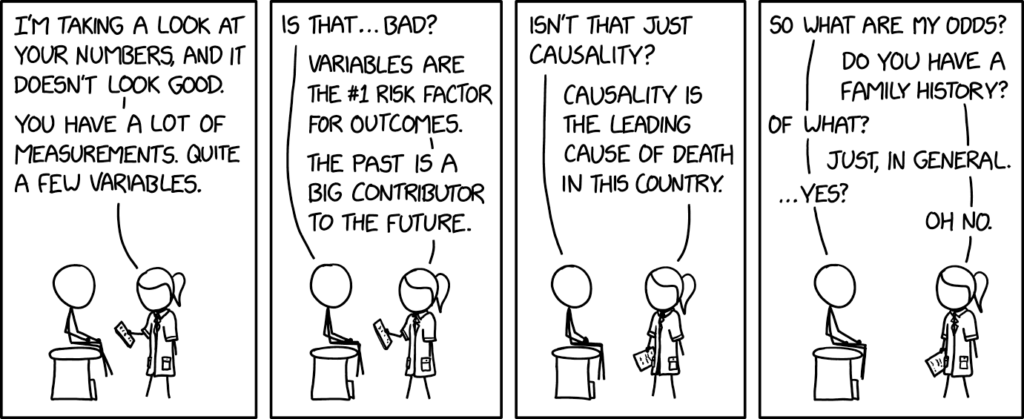

So causality and correlation are very easily mixed up, because it's easy for our brains to find simple links between facts. When we see A and B together, we immediately think of a cause-effect relationship between the two. But we should stop and consider that, while there may be a correlation, one is not necessarily causing the other. They just go together.

In insights analysis this is a very common error. And it often goes hand in hand with another error: attributing an insight to the wrong KPI, or better said, deriving the wrong insight from a KPI.

The same KPI tells a different story depending on its context. Sometimes two KPIs are merely correlated, sometimes there is a causal relationship. The avg. number of page impressions per visit drives the bounce rate up or down, because the more single-page visits there are, the higher the overall bounce rate is. But a page can have a high bounce rate and still be engaging. How often do you browse social media, come across an interesting article title, click it, read the article and then go back to the social network you came from? For the article's site that is a bounce visit… but if you read the article, you reached your goal of informing yourself about its content, so you were engaged with it. So is this bounce visit a bad visit or a good one? It depends on the context, and in this case the bounce rate should be analyzed together with an engagement rate to get a complete picture.
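Here is a small sketch of what reading the two KPIs together could look like. The visits are invented, and the 30-second threshold for calling a visit "engaged" is purely my assumption; a real analytics tool will have its own definition of engagement.

```python
# Hypothetical visits, invented for illustration: (pages viewed, seconds on the site).
visits = [(1, 5), (1, 180), (1, 240), (3, 90), (1, 8), (2, 60)]

# Assumed threshold: a visit counts as "engaged" after 30 seconds on the site.
ENGAGED_SECONDS = 30


def bounce_rate(visits):
    """Share of visits that viewed exactly one page."""
    bounces = [v for v in visits if v[0] == 1]
    return len(bounces) / len(visits)


def engaged_bounce_share(visits):
    """Share of bounce visits that still stayed long enough to count as engaged."""
    bounces = [v for v in visits if v[0] == 1]
    engaged = [v for v in bounces if v[1] >= ENGAGED_SECONDS]
    return len(engaged) / len(bounces)


print(f"bounce rate: {bounce_rate(visits):.0%}")
print(f"engaged bounces: {engaged_bounce_share(visits):.0%}")
```

In this toy sample two thirds of the visits are bounces, which looks bad on its own, but half of those bounces stayed for minutes: the readers who came from social media, read the article and left.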